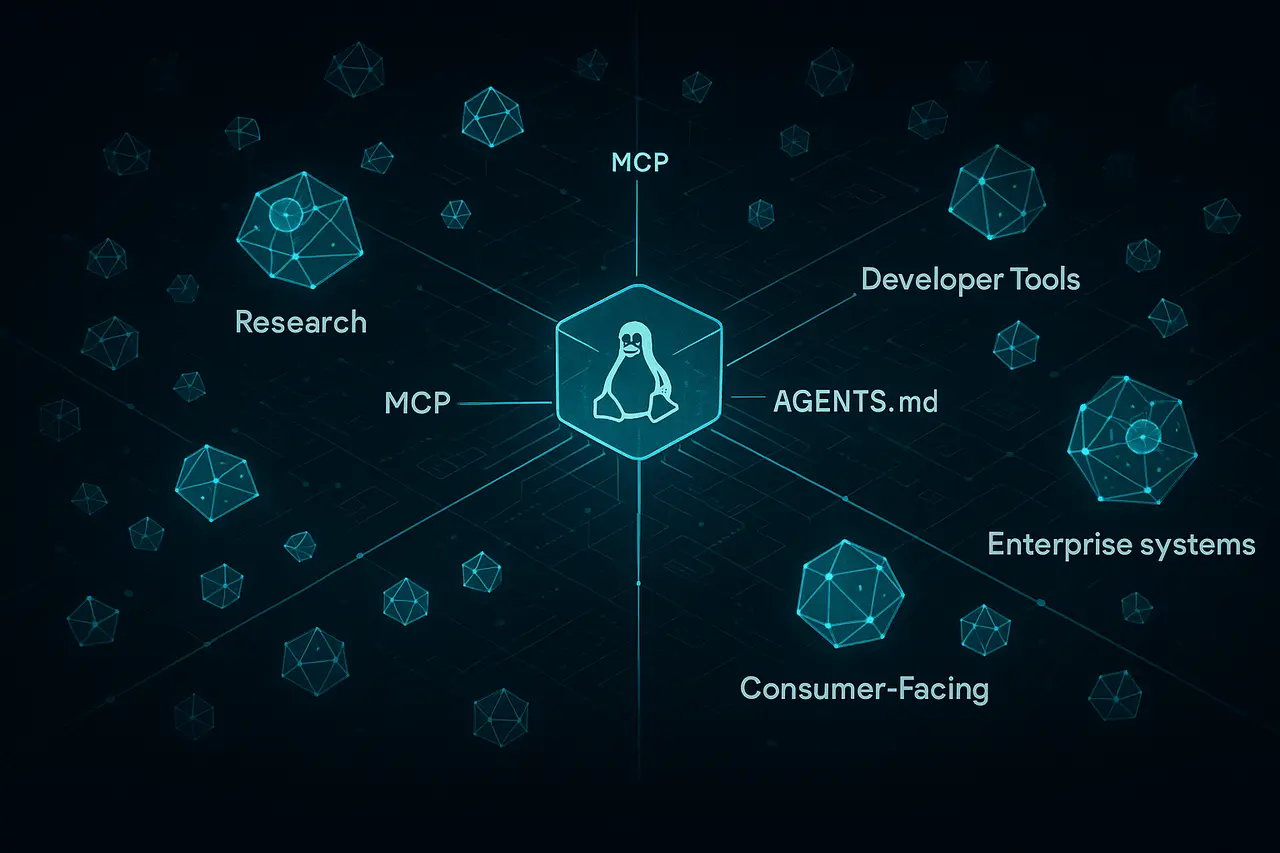

Scale AI has introduced SWE-Atlas, a new benchmark designed to measure how well AI coding agents complete complex software engineering tasks in a real-world development environment. SWE-Atlas extends the earlier SWE-Bench Pro framework by assessing capabilities beyond code-change accuracy, focusing on more complex engineering skills such as comprehending large codebases, writing tests, and refactoring software.

The first assessment released under SWE-Atlas, Codebase QnA, tests how well AI agents can analyse real software repositories using runtime execution and multi-file reasoning. Initial results show that even the most advanced AI models struggle with these tasks, highlighting a significant gap between current coding assistants and truly autonomous software engineering capability.

What Is SWE-Atlas?

The SWE-Atlas benchmark is an evaluation framework developed by Scale AI to measure the real-world capabilities of AI programming agents.

Traditional benchmarks usually focus on narrow tasks, like creating code snippets or fixing minor bugs. SWE-Atlas instead examines larger engineering workflows similar to those human engineers face when working with large codebases.

The framework offers a variety of testing tracks, each targeting a specific software engineering skill.

Core Evaluation Categories

SWE-Atlas comprises three main benchmark categories:

| Evaluation Category | What It Measures |
|---|---|
| Codebase QnA | How well AI agents understand complex codebases through runtime analysis and multi-file reasoning |
| Test Writing | Ability to generate meaningful tests that improve code coverage |
| Refactoring | Capability to restructure code while preserving functionality |

Together, these categories mark a change in how the AI industry assesses the progress of AI programming agents and the automation of software engineering.

Codebase QnA: Testing Deep Code Understanding

The Codebase QnA benchmark evaluates whether AI agents can analyse and make sense of large software systems.

Unlike traditional code benchmarks, the tasks require agents to:

- Run software inside a containerised environment

- Trace program execution

- Examine interactions across multiple files

- Explain in detail how the system functions

Agents are placed in a Docker environment containing a real open-source repository and must answer complex technical questions about the system.

These questions require multi-step reasoning and active investigation, rather than a simple code search.

Benchmark Structure

The evaluation currently comprises:

- 124 tasks

- 11 production-grade repositories

- 4 programming languages: Python, Go, C, and TypeScript

The repositories include systems such as:

- mail servers

- observability platforms

- object storage tools

- terminal emulators

These projects are realistic representations of engineering complexity rather than simple coding problems.

Early Results Reveal Major Limitations in AI Coding Agents

First results from the SWE-Atlas benchmark indicate that current AI models struggle to develop a deep understanding of a codebase.

Even the best-performing models had fairly low pass rates.

| Model | Codebase QnA Pass Rate |
|---|---|
| Claude Opus 4.6 | ~31.5% |
| GPT-5.2 | ~29% |
| GPT-5.3 Codex | ~27% |

The most effective models solve only about one-third of the tasks, suggesting that large language models have significant difficulty with complex engineering workflows.

This differs from older benchmarks such as SWE-Bench, where some systems achieved significantly higher success rates.

The difference highlights that real software engineering requires more than simply generating code patches.
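For a sense of scale, the pass rates in the results table can be converted into approximate task counts out of the 124 Codebase QnA tasks. The short Python sketch below does that arithmetic. The helper name `approx_tasks_passed` is hypothetical, and the counts are rough estimates only, since the reported rates are themselves approximate and may be averaged over several runs.

```python
TOTAL_TASKS = 124  # task count reported for Codebase QnA

# Approximate pass rates taken from the results table.
pass_rates = {
    "Claude Opus 4.6": 0.315,
    "GPT-5.2": 0.29,
    "GPT-5.3 Codex": 0.27,
}


def approx_tasks_passed(rate: float, total: int = TOTAL_TASKS) -> int:
    """Rough number of tasks passed, rounded to the nearest whole task."""
    return round(rate * total)


for model, rate in pass_rates.items():
    print(f"{model}: ~{approx_tasks_passed(rate)} of {TOTAL_TASKS} tasks")
```

On these figures, even the leading model passes roughly 39 of the 124 tasks.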

Why SWE-Atlas Represents a Shift in AI Evaluation

The launch of SWE-Atlas reflects a growing realisation within the AI community that older programming benchmarks are too narrow.

Most current evaluations measure whether an AI model can generate the correct code changes, as verified by automated tests.

But real software engineering involves a broader set of capabilities, including:

- Understanding system architecture

- Investigating runtime behaviour

- Finding the root cause of bugs

- Designing test cases

- Refactoring complex code structures

SWE-Atlas seeks to measure these broader capabilities.

Rubric-Based Evaluation

One of the major changes is the use of rubric-based scoring rather than relying solely on automated test verification.

This technique allows for the assessment of tasks that can’t be evaluated solely using unit tests, like:

- analysing system design

- explaining program behaviour

- identifying architectural dependencies

The approach also relies on a human-in-the-loop creation process, in which domain experts design complex tasks based on real repositories.
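To illustrate how rubric-based scoring can differ from test-based verification, here is a minimal Python sketch. The `RubricItem` class, the `task_passes` and `pass_rate` helpers, the example data, and the rule that every rubric item must be satisfied are all illustrative assumptions, not Scale AI's published scoring implementation.

```python
from dataclasses import dataclass


@dataclass
class RubricItem:
    """One expert-written criterion (hypothetical structure)."""
    description: str
    satisfied: bool  # verdict from a human or model grader


def task_passes(items: list[RubricItem]) -> bool:
    """Assumed rule: a task passes only if every rubric item is satisfied."""
    return bool(items) and all(item.satisfied for item in items)


def pass_rate(tasks: dict[str, list[RubricItem]]) -> float:
    """Fraction of tasks whose rubric is fully satisfied."""
    if not tasks:
        return 0.0
    passed = sum(1 for items in tasks.values() if task_passes(items))
    return passed / len(tasks)


# Made-up example data, not real benchmark tasks.
example_tasks = {
    "task-a": [
        RubricItem("Names the module that handles retries", True),
        RubricItem("Explains the runtime call order", True),
    ],
    "task-b": [
        RubricItem("Identifies the config file that is loaded", True),
        RubricItem("Describes the cross-file dependency", False),
    ],
}

print(f"Pass rate: {pass_rate(example_tasks):.0%}")
```

Under this assumed all-or-nothing rule, an answer that gets most of the way there still counts as a failure, which is one way a rubric can be stricter than a partial-credit score.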

Implications for AI Coding Assistants and Developer Tools

The findings from SWE-Atlas suggest that AI programming assistants need to improve before they can function as fully autonomous software engineers.

The benchmark also reveals areas where AI tools are advancing rapidly.

Key Capabilities Being Tested

The framework focuses on capabilities that are essential to the next generation of developer tools:

- Codebase comprehension

- Agentic reasoning across files

- Runtime debugging

- Automated test generation

- Large-scale refactoring

These skills are becoming crucial for AI-powered tools that integrate into development environments, such as:

- AI coding assistants

- autonomous development agents

- code review automation tools

Companies developing AI development platforms are likely to use benchmarks such as SWE-Atlas to gauge the real-world performance of agents.

SWE-Atlas vs SWE-Bench Pro

SWE-Atlas builds upon Scale AI's SWE-Bench Pro benchmark but substantially expands its scope.

| Feature | SWE-Bench Pro | SWE-Atlas |
|---|---|---|
| Primary Task | Code patch generation | Full software engineering workflows |
| Evaluation Method | Test-based verification | Rubric + reasoning evaluation |
| Focus | Bug fixes | Code understanding, testing, refactoring |
| Environment | Static tasks | Interactive runtime analysis |

By focusing on interactive software engineering workflows, SWE-Atlas is designed to resemble real-world development environments more closely.

What Comes Next for SWE-Atlas?

Scale AI plans to publish additional benchmark results beyond the initial Codebase QnA assessment.

The upcoming evaluations will measure:

- AI-generated test writing

- Automated code refactoring

Together, these benchmarks are designed to provide a comprehensive system for evaluating the performance of AI software engineering agents.

As AI models are increasingly incorporated into the development process, standardised benchmarks will play a crucial role in tracking progress.

My Final Thoughts

The introduction of the SWE-Atlas benchmark is an important step towards a more realistic assessment of AI programming agents. By focusing on deep code comprehension, runtime analysis, and reasoning across multiple files, it exposes the gap between existing language models and the intricate workflows used by professional developers.

The preliminary results suggest that even the most advanced AI models struggle with large-scale software engineering tasks. However, the benchmark offers a clearer picture of where AI software development tools need to improve.

As AI agents evolve from simple coding assistants into more autonomous systems, benchmarks like SWE-Atlas will play an essential role in assessing progress and shaping the future of AI-powered software engineering.

FAQs

1. What is the SWE-Atlas benchmark?

Scale AI created the SWE-Atlas benchmark to evaluate how well AI programming agents handle real-world software engineering tasks, such as analysing large codebases, writing tests, and refactoring software.

2. What makes SWE-Atlas different from SWE-Bench?

SWE-Atlas goes beyond bug fixes to assess broader engineering workflows, such as runtime analysis, codebase comprehension, and architectural understanding.

3. What is the Codebase QnA evaluation?

The Codebase QnA benchmark is the first benchmark released under SWE-Atlas. It tests whether AI agents can understand complex repositories and respond to technical questions about how the software operates.

4. How do current AI models perform on SWE-Atlas?

Initial results show that the top-performing AI models pass only around 30% of tasks, suggesting the need for improvements in deep code understanding.

5. Why are benchmarks like SWE-Atlas important?

Benchmarks allow researchers to measure the progress of AI programming agents and to find gaps between existing AI systems and human-level software engineering capability.

6. What tasks will future SWE-Atlas benchmarks assess?

The next evaluations will cover test writing and code refactoring, broadening the benchmark to include additional real-world software engineering skills.
