MiniMax is introducing MiniMax M2.7, a new AI model designed to evolve its own capabilities and to perform strongly on software engineering benchmarks. MiniMax M2.7 scored 56.22 percent on SWE-Bench Pro, a widely used benchmark of real-world programming tasks, and is now available through an API and through MiniMax’s agent platform. The release reflects a steady shift towards AI agents that can help improve their own development processes, an important step forward for applied AI engineering.

What Is MiniMax M2.7?

MiniMax M2.7 is a self-evolving AI model designed to support complex, agent-based workflows, especially in software engineering and automation.

Unlike conventional large language models, which respond to static instructions, M2.7 is designed to:

- Improve its outputs through iterative feedback loops

- Assist in developing and improving agent systems

- Function as a component of multi-step automation pipelines

This makes the model more akin to an autonomous AI agent than to a traditional prompt-response system.

SWE-Bench Pro Performance Signals Real-World Capability

A headline result of the release is M2.7’s 56.22% score on SWE-Bench Pro.

What is SWE-Bench Pro?

SWE-Bench Pro assesses how well AI models can:

- Fix real GitHub issues

- Navigate large codebases

- Produce working, test-passing solutions
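“Test-passing” has a fairly precise meaning in SWE-bench-style evaluations: a task typically counts as resolved only if the tests that reproduce the issue now pass and the tests that passed before the patch still pass. The Python sketch below computes a resolve rate under that assumption. The `TaskResult` structure, its field names, and the sample tasks are hypothetical illustrations, not SWE-Bench Pro’s actual harness format.

```python
from dataclasses import dataclass


@dataclass
class TaskResult:
    """Hypothetical per-task test outcome (not the official harness schema)."""
    task_id: str
    fail_to_pass: dict[str, bool]  # issue-reproducing tests -> passing after the patch?
    pass_to_pass: dict[str, bool]  # previously passing tests -> still passing after the patch?


def is_resolved(result: TaskResult) -> bool:
    # Resolved only if every issue-reproducing test now passes
    # and no previously passing test has regressed.
    return all(result.fail_to_pass.values()) and all(result.pass_to_pass.values())


def resolve_rate(results: list[TaskResult]) -> float:
    """Percentage of tasks resolved (a 56.22% score would return 56.22)."""
    if not results:
        return 0.0
    resolved = sum(is_resolved(r) for r in results)
    return 100.0 * resolved / len(results)


if __name__ == "__main__":
    results = [
        TaskResult("repo-a#1", {"test_bug": True}, {"test_old": True}),   # resolved
        TaskResult("repo-a#2", {"test_bug": True}, {"test_old": False}),  # regression
        TaskResult("repo-b#7", {"test_bug": False}, {"test_old": True}),  # bug not fixed
        TaskResult("repo-c#3", {"test_bug": True}, {}),                   # resolved
    ]
    print(f"{resolve_rate(results):.2f}%")  # 50.00%
```

Read this way, a 56.22% score means the model’s patches met both conditions on roughly 56 of every 100 benchmark tasks.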

Why It Matters

A score above 50% means the model resolved more than half of the benchmark’s tasks, which suggests MiniMax M2.7 can handle a meaningful share of real-world engineering work, not just isolated coding prompts.

| Metric | MiniMax M2.7 |
|---|---|
| SWE-Bench Pro Score | 56.22% |
| Task Type | Real-world software engineering |
| Evaluation Focus | Bug fixing, repo navigation |

This puts M2.7 within a growing category of models optimized to improve developer productivity and AI-assisted coding workflows.

Built Using Its Own Agent Framework

One of the most notable aspects of MiniMax M2.7 is that the model was used during its own development to build complex agent harnesses.

What does this mean?

- The model took part in the creation of its own tooling ecosystem

- It was tested in real-world agent orchestration scenarios

- It shows an early form of a self-reinforcing AI development loop

This reflects a broader trend in AI research, in which models are increasingly used to:

- Generate training data

- Improve evaluation pipelines

- Optimize system-level architectures

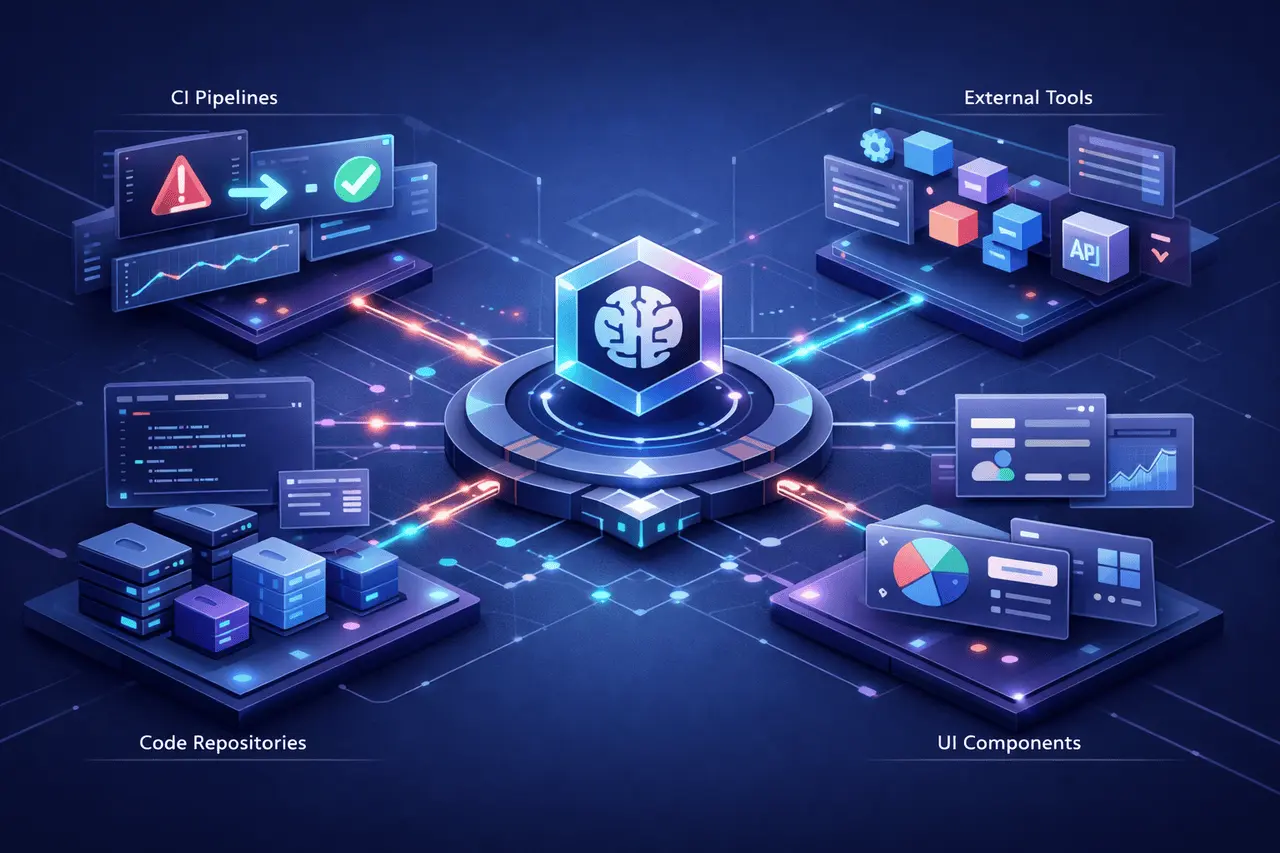

MiniMax Agent Integration Expands Practical Use

MiniMax has made M2.7 available through:

- APIs for developers

- Integration with the MiniMax Agent platform

Key Capabilities Through Agent Integration

- Multi-step task execution

- Tool use and orchestration

- Autonomous debugging workflows

- Iterative code refinement

With these capabilities, developers can build AI-powered automation systems that go well beyond single, one-off prompts.
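Multi-step execution with tool use generally reduces to a loop: the model picks an action, the harness runs the matching tool, and the observation is appended to the transcript the model sees next, until the model signals it is done. The sketch below is a generic illustration, not MiniMax’s API or agent platform; `scripted_model` is a hard-coded stand-in for a real model call, and the tools `run_tests` and `apply_patch` are hypothetical.

```python
from typing import Callable


# Hypothetical tools; a real harness would run shell commands, edit files, etc.
def run_tests(state: dict) -> str:
    return "PASS" if state.get("patched") else "FAIL: test_parse_date"


def apply_patch(state: dict) -> str:
    state["patched"] = True
    return "patch applied to utils.py"


TOOLS: dict[str, Callable[[dict], str]] = {
    "run_tests": run_tests,
    "apply_patch": apply_patch,
}


def scripted_model(history: list[str]) -> str:
    """Stand-in for a model call: chooses the next action from the transcript."""
    if not history:
        return "run_tests"
    last = history[-1]
    if last.startswith("run_tests -> FAIL"):
        return "apply_patch"
    if last.startswith("apply_patch"):
        return "run_tests"
    return "done"


def run_agent(model: Callable[[list[str]], str], max_steps: int = 10) -> list[str]:
    state: dict = {}
    history: list[str] = []
    for _ in range(max_steps):
        action = model(history)
        if action == "done":
            break
        observation = TOOLS[action](state)            # execute the chosen tool
        history.append(f"{action} -> {observation}")  # model sees this next turn
    return history


if __name__ == "__main__":
    for step in run_agent(scripted_model):
        print(step)
```

The `max_steps` cap is a simple safeguard so a confused agent cannot loop indefinitely.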

Key Capabilities of MiniMax M2.7

1. Self-Evolving Learning Loops

M2.7 can refine its outputs through repeated cycles of evaluation and correction, improving results over successive iterations.
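The article does not describe how M2.7 implements these cycles internally. As a generic illustration, the sketch below shows an inference-time generate-evaluate-correct loop: a candidate is produced, checked against tests, and the failures become feedback for the next attempt. `propose` is a scripted stand-in for a model call, and the `clamp` function and its test cases are hypothetical.

```python
from typing import Callable, Optional

Candidate = Callable[[int, int, int], int]

# Hypothetical test cases for clamp(x, lo, hi): (args, expected result)
TESTS = [((5, 0, 10), 5), ((-3, 0, 10), 0), ((42, 0, 10), 10)]


def evaluate(candidate: Candidate) -> list[str]:
    """Return feedback messages for failing tests (empty list = all pass)."""
    failures = []
    for args, expected in TESTS:
        got = candidate(*args)
        if got != expected:
            failures.append(f"clamp{args} returned {got}, expected {expected}")
    return failures


def propose(feedback: list[str], attempt: int) -> Candidate:
    """Scripted stand-in for a model call. A real system would put `feedback`
    into the next prompt; this stub just returns the next pre-written draft."""
    drafts: list[Candidate] = [
        lambda x, lo, hi: max(x, lo),           # first draft forgets the upper bound
        lambda x, lo, hi: min(max(x, lo), hi),  # corrected draft
    ]
    return drafts[min(attempt, len(drafts) - 1)]


def refine(max_iters: int = 5) -> tuple[Optional[Candidate], int]:
    feedback: list[str] = []
    for attempt in range(max_iters):
        candidate = propose(feedback, attempt)
        feedback = evaluate(candidate)
        if not feedback:
            return candidate, attempt + 1  # all tests pass
    return None, max_iters


if __name__ == "__main__":
    best, iterations = refine()
    print(iterations, best(15, 0, 10))  # 2 10
```

Nothing here updates model weights; the improvement comes entirely from feeding evaluation results back into the next attempt.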

2. Agent-Based Architecture Support

Designed to work with AI agent frameworks that support task decomposition and tool use.
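Task decomposition in agent frameworks often means splitting a goal into subtasks with dependencies and running each one only after its prerequisites finish. Below is a minimal sketch using Python’s standard-library `graphlib` (Python 3.9+); the subtask names are hypothetical, and this is not MiniMax’s own framework.

```python
from graphlib import TopologicalSorter

# Hypothetical decomposition of "fix the failing date parser".
# Each subtask maps to the set of subtasks it depends on.
subtasks: dict[str, set[str]] = {
    "reproduce_bug": set(),
    "locate_code": {"reproduce_bug"},
    "write_patch": {"locate_code"},
    "add_regression_test": {"reproduce_bug"},
    "run_test_suite": {"write_patch", "add_regression_test"},
}


def execution_order(graph: dict[str, set[str]]) -> list[str]:
    """Return a sequential order in which every subtask follows its dependencies."""
    return list(TopologicalSorter(graph).static_order())


def parallel_batches(graph: dict[str, set[str]]) -> list[list[str]]:
    """Group subtasks into batches whose members could run concurrently."""
    sorter = TopologicalSorter(graph)
    sorter.prepare()
    batches = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())  # every dependency already done
        batches.append(ready)
        sorter.done(*ready)
    return batches


if __name__ == "__main__":
    print(execution_order(subtasks))
    print(parallel_batches(subtasks))
```

`parallel_batches` groups subtasks whose dependencies are all satisfied, which is where an agent framework could dispatch independent work concurrently.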

3. Strong Coding and Engineering Focus

Optimized for:

- Debugging

- Code generation

- Repository-level reasoning

4. Scalable API Access

Developers can incorporate the model in applications, automation tools, and enterprise workflows.
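As a rough sketch of what API integration can look like, the code below builds an HTTP request in the OpenAI-style chat completions format that many model providers accept, using only the Python standard library. The base URL and model identifier are placeholders and assumptions, not confirmed MiniMax values; check MiniMax’s API documentation for the real endpoint, model name, and response shape before sending anything.

```python
import json
import urllib.request

BASE_URL = "https://api.example.com/v1"  # hypothetical placeholder, not MiniMax's real endpoint
MODEL_ID = "MiniMax-M2.7"                # assumed model identifier; confirm in MiniMax's docs


def build_chat_request(prompt: str, api_key: str) -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style chat completions request."""
    payload = {
        "model": MODEL_ID,
        "messages": [
            {"role": "system", "content": "You are a careful software engineering assistant."},
            {"role": "user", "content": prompt},
        ],
    }
    return urllib.request.Request(
        url=f"{BASE_URL}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def send(request: urllib.request.Request) -> str:
    """Send the request and return the first choice's text.
    Assumes an OpenAI-style response body; verify against the provider's docs."""
    with urllib.request.urlopen(request, timeout=60) as resp:
        body = json.load(resp)
    return body["choices"][0]["message"]["content"]


if __name__ == "__main__":
    req = build_chat_request("Summarize the failing test output.", api_key="YOUR_API_KEY")
    print(req.get_method(), req.full_url)  # POST https://api.example.com/v1/chat/completions
```

Separating request construction from `send` makes it easy to log or unit-test prompts without making network calls.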

How Does It Compare to Traditional AI Models?

| Feature | Traditional LLMs | MiniMax M2.7 |
|---|---|---|
| Learning Approach | Static training | Iterative, self-evolving |
| Task Execution | Single-step prompts | Multi-step agent workflows |
| Coding Ability | Prompt-based coding | Real-world repo interaction |
| Development Role | Assistant | Active system builder |

This contrast illustrates the shift from AI systems that assist developers to AI systems that take part in the development process itself.

Why MiniMax M2.7 Matters for the AI Industry

The release highlights several important industry trends:

Rise of AI Agents

AI is moving towards automated systems that can plan, execute, and refine tasks with minimal human input.

Focus on Real-World Benchmarks

Benchmarks such as SWE-Bench Pro measure performance on practical, real-world tasks rather than purely theoretical ones.

Self-Improving AI Systems

Models that contribute to their own development point towards recursive self-improvement loops.

Practical Use Cases

MiniMax M2.7 can be used across several domains:

For Developers

- Automated bug fixing

- Codebase refactoring

- CI/CD pipeline assistance

For Businesses

- Workflow automation

- Internal tooling development

- AI-driven engineering productivity

For AI Researchers

- Agent system experimentation

- Multi-step reasoning research

- Model evaluation frameworks

Limitations and Considerations

Although promising, the model raises several practical considerations:

- Reliability on more complex tasks still depends on human supervision.

- Agent systems may add computational cost.

- Real-world deployment requires robust evaluation pipelines.
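One simple way to keep agent costs and supervision in check is a hard budget that stops a run and hands it to a human once a limit is reached. The sketch below assumes token counts are available for each step; the `TokenBudget` class, the 10,000-token limit, and the per-step figures are hypothetical.

```python
from dataclasses import dataclass, field


class BudgetExceeded(RuntimeError):
    """Raised when the next step would exceed the run's token budget."""


@dataclass
class TokenBudget:
    """Caps the total tokens one agent run may consume (limit is an assumed policy)."""
    max_tokens: int
    used: int = 0
    log: list[tuple[str, int]] = field(default_factory=list)

    def charge(self, step: str, tokens: int) -> None:
        # Refuse the step before spending, so the budget is never overrun.
        if self.used + tokens > self.max_tokens:
            raise BudgetExceeded(
                f"step '{step}' needs {tokens} tokens; "
                f"{self.max_tokens - self.used} of {self.max_tokens} remain"
            )
        self.used += tokens
        self.log.append((step, tokens))


if __name__ == "__main__":
    budget = TokenBudget(max_tokens=10_000)
    # Hypothetical per-step token counts; a real harness would take them
    # from the usage figures reported by the model provider.
    steps = [("plan", 1_200), ("edit", 4_500), ("test", 2_000), ("retry", 3_000)]
    try:
        for name, tokens in steps:
            budget.charge(name, tokens)
    except BudgetExceeded as err:
        print("halting for human review:", err)
    print(budget.used)  # 7700
```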

As with any AI model, careful integration is crucial.

My Final Thoughts

MiniMax M2.7 represents a move towards self-evolving AI systems that actively contribute to development workflows. By combining strong SWE-Bench Pro performance with an agent-based architecture and API access, the model shows how AI is evolving from static assistants into dynamic, autonomous systems.

As AI agents become more capable and efficient, tools such as M2.7 could play a major role in automation, software engineering, enterprise AI adoption, and the broader transition to AI-powered systems.

FAQs

1. What is MiniMax M2.7?

MiniMax M2.7 is a self-evolving AI model designed to support agent-based software engineering and workflow automation.

2. What exactly does “self-evolving” mean in AI models?

It refers to the model’s ability to improve its outputs through feedback loops and multi-step processes.

3. What is SWE-Bench Pro?

SWE-Bench Pro is a benchmark that tests AI models’ ability to solve real-world software engineering problems drawn from GitHub repositories.

4. How do developers gain access to MiniMax M2.7?

Developers can access M2.7 through the MiniMax API and the MiniMax Agent platform to build automated workflows.

5. What makes MiniMax M2.7 different from other AI models?

Its emphasis on self-improving feedback loops and agent-based execution sets it apart from traditional prompt-driven AI systems.

6. Is MiniMax M2.7 appropriate for production use?

It can be used in production settings but requires proper testing, monitoring, and validation, as with any other artificial intelligence system.
