WarpGrep v2 is a purpose-built code search subagent from Morph that improves large language model (LLM) performance by separating search from reasoning. By keeping the main model’s context clean and delegating search to a fast, parallel agent, WarpGrep v2 delivers top results on SWE-Bench Pro.

This architectural shift addresses a major problem in advanced AI systems: context overload. As context windows grow to 100k-1M tokens, performance degrades when search noise crowds the model’s working context. WarpGrep v2 is designed to remove this bottleneck.

What Is WarpGrep v2?

WarpGrep v2 is a search subagent designed for:

- Multi-repository code search

- Package search

- Log search

- Parallelized code retrieval

Instead of having the primary model search repositories line by line inside its own context window, WarpGrep runs independently. It searches quickly and returns only the relevant information.

This division enhances efficiency, performance, and response speed.

Why Splitting Out Code Search Improves AI Performance

The Context Overload Problem

Modern foundation models can handle huge context windows. However:

- Performance often drops beyond 100k tokens

- Larger contexts can increase latency

- Excess search content lowers reasoning quality

Even million-token models slow down when loaded with irrelevant code and logs.

WarpGrep v2 stops this by isolating search-related tasks.
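To make the overload problem concrete, here is a minimal sketch that estimates how much of a context window a raw search dump would occupy compared with a filtered result. The four-characters-per-token ratio is a rough assumption (real tokenizers vary by model), the sizes are illustrative, and `estimate_tokens` and `context_share` are hypothetical helper names, not part of WarpGrep.

```python
def estimate_tokens(text: str, chars_per_token: int = 4) -> int:
    """Rough token estimate. Assumes ~4 characters per token; tokenizers vary."""
    return len(text) // chars_per_token


def context_share(text: str, context_window: int) -> float:
    """Fraction of the context window that `text` would occupy."""
    return estimate_tokens(text) / context_window


# Illustrative sizes only: a raw search dump versus a few filtered snippets.
raw_dump = "x" * 400_000
filtered = "x" * 2_000

raw_share = context_share(raw_dump, context_window=200_000)
filtered_share = context_share(filtered, context_window=200_000)
print(f"raw dump: {raw_share:.1%} of the window")
print(f"filtered: {filtered_share:.2%} of the window")
```

Under these assumed sizes, the raw dump takes up half of the window before any reasoning starts, while the filtered result takes a fraction of a percent.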

How WarpGrep v2 Works

Clean Context Architecture

The system is built around two agents:

- Main Agent

  - Performs reasoning

  - Writes patches

  - Executes tool calls

  - Handles high-level logic

- WarpGrep Subagent

  - Performs parallel search

  - Scans repositories, packages, and logs

  - Returns only relevant content

  - Keeps search noise out of the main agent’s context

This ensures that the main agent sees only filtered, actionable results.
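The two-agent split can be sketched in a few lines. This is a minimal illustration under stated assumptions, not WarpGrep’s actual interface: `Snippet`, `search_subagent`, and `build_main_context` are hypothetical names, and a plain regex scan over `.py` files stands in for the real search agent.

```python
import re
from dataclasses import dataclass
from pathlib import Path


@dataclass
class Snippet:
    """One filtered search hit handed back to the main agent."""
    path: str
    line_no: int
    text: str


def search_subagent(root: str, pattern: str, max_hits: int = 5) -> list[Snippet]:
    """Hypothetical stand-in for the search subagent.

    It reads every Python file under `root` but returns at most `max_hits`
    matching lines, so the caller never sees the raw file contents.
    """
    regex = re.compile(pattern)
    hits: list[Snippet] = []
    for file in sorted(Path(root).rglob("*.py")):
        lines = file.read_text(errors="ignore").splitlines()
        for i, line in enumerate(lines, start=1):
            if regex.search(line):
                hits.append(Snippet(str(file), i, line.strip()))
                if len(hits) >= max_hits:
                    return hits
    return hits


def build_main_context(task: str, snippets: list[Snippet]) -> str:
    """Assemble the main agent's prompt from the task plus filtered hits only."""
    body = "\n".join(f"{s.path}:{s.line_no}: {s.text}" for s in snippets)
    return f"Task: {task}\n\nRelevant code:\n{body}"
```

The point of the design is that the subagent may read the whole repository, but the string built by `build_main_context` contains only the matching lines, so the main agent’s context grows with the number of hits rather than with the size of the codebase.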

Parallel Search Design

WarpGrep executes searches in parallel across:

- Local repositories

- Multiple GitHub URLs

- Code + dependency packages

- System logs

This speeds up retrieval and reduces token usage in the reasoning model.
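As a rough illustration of the fan-out pattern, the sketch below searches several sources concurrently with Python’s standard `concurrent.futures.ThreadPoolExecutor` and merges the hits. The source names and contents are made up, `search_source` and `parallel_search` are hypothetical helpers, and in-memory lists stand in for real repositories, packages, and logs.

```python
from concurrent.futures import ThreadPoolExecutor


def search_source(name: str, lines: list[str], query: str) -> list[str]:
    """Search one source and tag each hit with the source name."""
    return [f"{name}: {line}" for line in lines if query in line]


def parallel_search(sources: dict[str, list[str]], query: str) -> list[str]:
    """Search all sources concurrently and merge the hits in source order."""
    with ThreadPoolExecutor(max_workers=len(sources) or 1) as pool:
        futures = [
            pool.submit(search_source, name, lines, query)
            for name, lines in sources.items()
        ]
        results: list[str] = []
        for future in futures:  # iterate in submission order for stable output
            results.extend(future.result())
    return results


# Made-up sources standing in for a repo, a dependency, and a log file.
sources = {
    "local_repo": ["def connect(timeout):", "return sock"],
    "dependency": ["class Timeout(Exception):", "pass"],
    "system_log": ["ERROR timeout after 30s", "INFO started"],
}
hits = parallel_search(sources, "timeout")
print(hits)
```

Threads mainly help when each search is I/O-bound, such as reading files or calling a remote API; for this in-memory toy the benefit is purely illustrative.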

Performance Gains on SWE-Bench Pro

WarpGrep v2 reached the top position on SWE-Bench Pro when paired with several leading models.

Performance Comparison Table

| Model | Without WarpGrep | With WarpGrep v2 | Improvement |
|---|---|---|---|
| Opus 4.6 (high) | 55.4 | 57.5 | +2.1 |
| GPT-5.3 Codex (high) | 56.0 | 59.1 | +3.1 |
| MiniMax 2.5 | 55.4 | 57.6 | +3.7 |

The gains are similar across all three models, suggesting that splitting out search helps a range of model types.

Notably, the reported improvements range from +2.1 to +3.7 points, a meaningful gain on a benchmark where incremental improvements are hard to achieve.

Key Technical Insights from Morph’s Training Research

Morph trained WarpGrep v2 using more than 15,000 B200 GPU hours. The findings reveal important lessons about AI agent design.

1. Reinforcement Learning Tradeoffs

Applying reinforcement learning (RL) to parallel tool-call formats caused regressions in ordinary sequential tool use.

This underscores the difficulty of optimizing multi-agent coordination without compromising core capabilities.

2. Task Decomposition Works — Selectively

Not all tasks profit from being split into subagents.

Some systems improve dramatically when they are isolated, while others don’t. Deciding which processes to separate is crucial.

3. Evaluation Integrity Challenges

Stopping models from exploiting Git history or web search to “cheat” on evaluations proved difficult.

This highlights the importance of robust benchmark design.
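One possible safeguard against the Git-history leak, shown here purely as an assumption and not as Morph’s documented method, is to hide every commit made after the task’s base commit before the agent runs. The `visible_history` helper and the commit IDs below are hypothetical.

```python
def visible_history(commits: list[str], base_sha: str) -> list[str]:
    """Return commits up to and including `base_sha`.

    `commits` must be ordered oldest to newest. Anything after the base
    commit could contain the reference fix, so it is dropped.
    Raises ValueError if `base_sha` is not in the list.
    """
    cut = commits.index(base_sha) + 1
    return commits[:cut]


history = ["a1f3", "b2e4", "c3d5", "d4c6"]  # hypothetical commit IDs
print(visible_history(history, base_sha="b2e4"))
```

A real harness would also need to cover other leak paths mentioned above, such as web search, which this sketch does not address.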

4. Training Cost Is High

Developing reliable parallel search agents requires a significant investment in compute. Efficient architecture choices are vital.

Feature Expansion in WarpGrep v2

WarpGrep v2 is no longer just an optimization layer for benchmarks. It’s now a general-purpose search subagent.

Core Capabilities

- Fast multi-repo search (local repositories and multiple GitHub URLs)

- Code and package search

- High-speed log search

- Parallel execution architecture

These characteristics make it appropriate for:

- AI coding agents

- DevOps automation

- CI/CD debugging workflows

- Multi-repository enterprise systems

Traditional Approach vs Subagent Architecture

| Traditional Integrated Search | WarpGrep Subagent Model |
|---|---|
| Search performed inside main context | Search handled externally |
| High token consumption | Minimal token footprint |
| Context pollution | Clean reasoning context |
| Slower large-repo scans | Parallel fast search |
| Performance drops at large contexts | Stable reasoning quality |

The difference is architectural, not incremental.

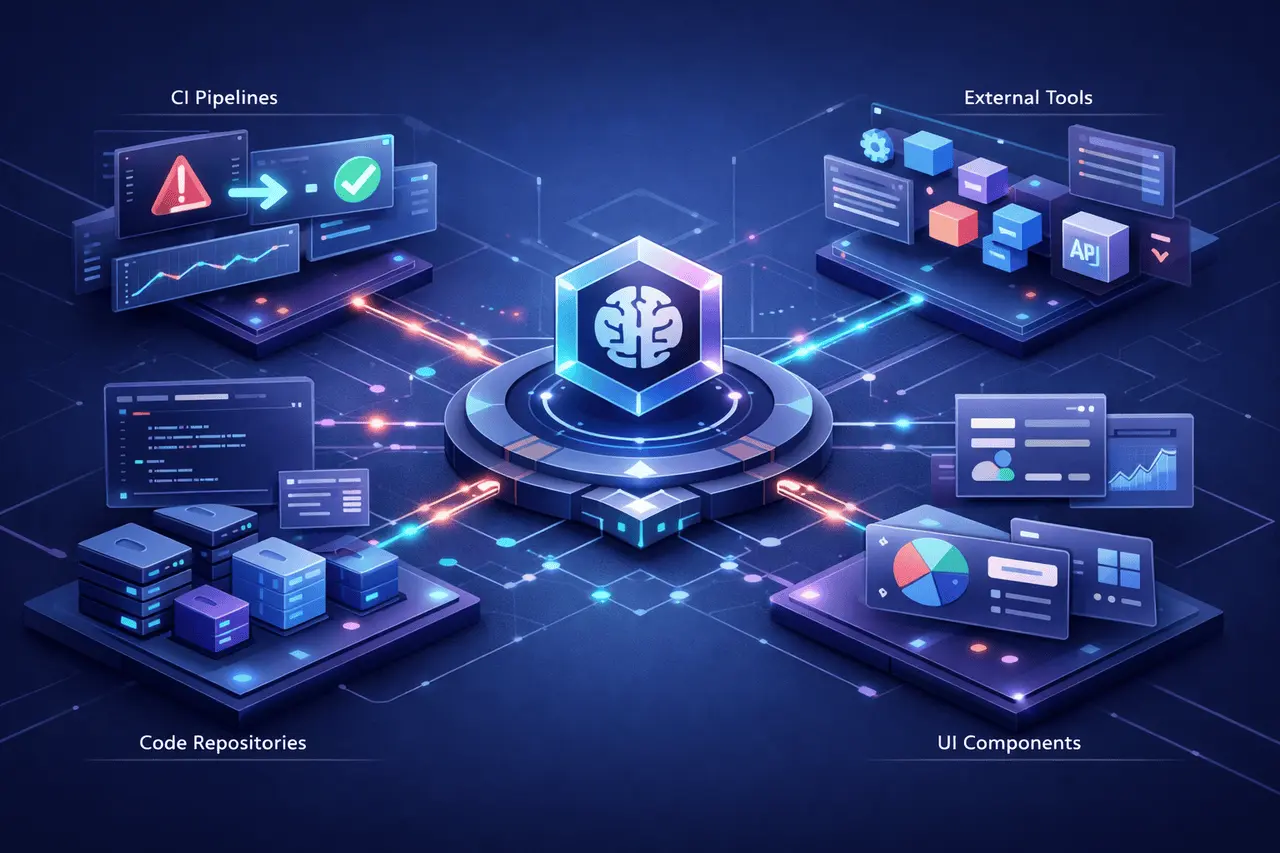

Why This Matters for AI Coding Agents

Advanced AI coding systems increasingly rely on:

- Tool usage

- Multi-step reasoning

- Large-repository analysis

- Long-context models

However, having a larger context doesn’t mean better reasoning.

WarpGrep v2 suggests that:

- Modular design improves benchmark performance

- Specialized subagents can outperform monolithic approaches

- Clean context windows preserve the quality of reasoning

This aligns with the wider trend toward multi-agent AI systems rather than single, all-in-one models.

Benefits of WarpGrep v2

- Higher SWE-Bench Pro performance

- Lower context token usage

- Faster repository search

- Improved cost efficiency

- Scalable multi-repo handling

Limitations and Practical Considerations

Despite strong results, several realities remain:

- Training specialized agents is computationally intensive

- Optimizing RL across different tool formats is complex

- Task decomposition should be selective

- Evaluations need safeguards against cheating

WarpGrep’s architecture improves performance, but it can’t eliminate the fundamental trade-offs inherent in AI system design.

How WarpGrep v2 Fits into the Future of AI Systems

As AI coding agents become more sophisticated, separation of concerns is likely to become the norm:

- Search agents

- Reasoning agents

- Planning agents

- Execution agents

WarpGrep v2 shows that moving search into a dedicated subagent can yield tangible gains in performance, cost, and speed.

Instead of expanding context windows forever, optimizing how context is used could be the best approach.

My Final Thoughts

WarpGrep v2 shows that specialized code search subagents can measurably improve results on coding benchmarks such as SWE-Bench Pro. By separating search from reasoning, Morph achieved gains across a variety of models while also reducing context load.

The most important insight is architectural: scaling context alone is not enough. Clean, modular systems that delegate tasks effectively can outperform monolithic designs.

As AI systems become more sophisticated and complex, structured multi-agent solutions such as WarpGrep v2 could define the next generation of high-performance coding assistants.

FAQs

1. What exactly is WarpGrep version 2?

WarpGrep v2 is a purpose-built code search subagent created by Morph that improves AI model performance by separating search from reasoning.

2. How does WarpGrep v2 improve SWE-Bench Pro scores?

It avoids overloading the context by running searches in parallel outside the main model and returning only relevant fragments to the main reasoning model.

3. Does WarpGrep work with all AI models?

Benchmarks show improvements across a variety of models, including Opus 4.6, GPT-5.3 Codex, and MiniMax 2.5.

4. Why not just increase the context size instead of splitting out search?

Large context windows can degrade reasoning performance once they exceed certain thresholds. Splitting out search helps keep the main model’s context clear and focused.

5. Is training a search subagent expensive?

Yes. Morph has reported that a significant amount of GPU time was required to train WarpGrep effectively.
