CoPaw is now open-source, giving users full control over their AI companion. Built on a revamped technology stack, CoPaw focuses on model freedom, local-first deployment, and a modular architecture with multichannel connectivity. It lets users and developers run AI models locally, integrate custom providers, and build modular agent workflows without vendor lock-in.

This move towards greater transparency aligns with the growing demand for privacy-oriented AI assistants, programmable agent frameworks, and cloud and hybrid-cloud architectures.

What Is CoPaw?

CoPaw is a flexible, personalised AI assistant designed for both local and cloud environments. It lets users:

- Run large language models (LLMs) locally

- Integrate private API endpoints

- Enhance capabilities through tools and skills

- Connect AI workflows to various communication channels

Unlike closed AI assistants, CoPaw emphasises control, flexibility, and data ownership.

Why CoPaw Matters in Today’s AI Landscape

AI users increasingly require:

- Local deployment for privacy

- Model flexibility beyond a single provider

- Persistent memory for context continuity

- Cross-platform integrations

CoPaw meets these requirements with:

- Native support for local inference engines

- Modular “Lego-like” architecture

- Agentic workflows

- Built-in long-term memory, without complicated setup

This matters for power users, developers, and companies building secure AI systems.

Ultimate Model Freedom: Local-First AI Architecture

A defining feature of CoPaw is its local-first design.

Native Local Model Support

CoPaw offers full, native support for:

- Ollama

- llama.cpp

- MLX (optimised for Apple Silicon)

This lets users run lightweight or fine-tuned models directly on their computers.
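To make local inference concrete, here is a minimal sketch that talks to a locally running Ollama server directly over HTTP. It is not CoPaw's own code. It assumes Ollama is listening on its default port (11434), and the helper names `build_generate_request` and `generate` are made up for this example.

```python
import json
import urllib.request

# Assumption: a local Ollama server on its default port.
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_generate_request(model: str, prompt: str) -> urllib.request.Request:
    """Build (but do not send) a non-streaming generate request."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate(model: str, prompt: str, timeout: float = 60.0) -> str:
    """Send the request to the local server and return the generated text."""
    request = build_generate_request(model, prompt)
    with urllib.request.urlopen(request, timeout=timeout) as response:
        body = json.loads(response.read().decode("utf-8"))
    return body["response"]
```

With a server running, `generate("llama3", "Say hello in one sentence.")` returns the reply as a string. The model name `llama3` is a placeholder for whichever model you have pulled locally. No data leaves the machine, which is the point of a local-first setup.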

Bring Your Own Model (BYOM)

Users can:

- Add custom model providers

- Remove providers quickly

- Integrate private API endpoints

- Route requests across various model backends

This provides flexibility and eliminates dependence on a single cloud provider.
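The add, remove, and route operations above can be pictured as a small provider registry. The sketch below is purely illustrative: `ProviderRegistry` and its methods are hypothetical names, not CoPaw's API, and each provider is reduced to a plain function that takes a prompt and returns text.

```python
from typing import Callable, Dict, List

# A provider is modelled as a function: prompt in, completion out.
Provider = Callable[[str], str]


class ProviderRegistry:
    """Hypothetical registry; names and methods are illustrative only."""

    def __init__(self) -> None:
        self._providers: Dict[str, Provider] = {}

    def add(self, name: str, provider: Provider) -> None:
        """Add a provider, or replace one already stored under this name."""
        self._providers[name] = provider

    def remove(self, name: str) -> None:
        """Remove a provider; removing an unknown name is a no-op."""
        self._providers.pop(name, None)

    def names(self) -> List[str]:
        return sorted(self._providers)

    def route(self, name: str, prompt: str) -> str:
        """Send the prompt to the named backend."""
        if name not in self._providers:
            raise KeyError(f"unknown provider: {name}")
        return self._providers[name](prompt)


registry = ProviderRegistry()
registry.add("local", lambda p: f"[local] {p}")
registry.add("private-api", lambda p: f"[private-api] {p}")
print(registry.route("local", "hello"))  # [local] hello
registry.remove("private-api")
print(registry.names())  # ['local']
```

Because callers only ever refer to a provider by name, swapping one backend for another does not require touching the code that sends prompts.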

Feature Comparison Table

| Capability | Traditional AI Assistants | CoPaw |
|---|---|---|
| Local model execution | Limited or none | Native support |
| Custom API endpoints | Restricted | Fully configurable |
| Model swapping | Rarely supported | Supported |
| Data control | Vendor-managed | User-controlled |

Smarter Long-Term Memory Without Complexity

Many AI assistants struggle with session-based “amnesia.” CoPaw introduces persistent long-term memory.

Key Enhancements

- Preference tracking

- Task continuity

- Local vector search mode

- Out-of-the-box Windows compatibility

A new local mode eliminates the need for complex databases while still allowing semantic memory retrieval.

This makes it suitable for:

- Personal productivity

- Research workflows

- Task automation systems
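To show why local vector search needs no database, here is a toy in-memory version. It is a sketch under heavy assumptions, not CoPaw's implementation: the `embed` function uses plain word counts as a stand-in for a real embedding model (so it matches shared words, not true meaning), and `LocalMemory` is a hypothetical name.

```python
import math
import re
from collections import Counter
from typing import List, Tuple


def embed(text: str) -> Counter:
    """Toy embedding: lowercase word counts (stand-in for a real model)."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


class LocalMemory:
    """Hypothetical memory store kept entirely in a Python list."""

    def __init__(self) -> None:
        self._items: List[Tuple[str, Counter]] = []

    def remember(self, text: str) -> None:
        self._items.append((text, embed(text)))

    def recall(self, query: str, k: int = 1) -> List[str]:
        """Return the k stored texts most similar to the query."""
        q = embed(query)
        ranked = sorted(self._items, key=lambda item: cosine(q, item[1]), reverse=True)
        return [text for text, _ in ranked[:k]]


memory = LocalMemory()
memory.remember("User prefers dark mode in the editor")
memory.remember("Weekly report is due every Friday")
print(memory.recall("when is the report due"))
# ['Weekly report is due every Friday']
```

Swapping `embed` for a real embedding model would turn the word matching into semantic matching while the rest of the structure stays the same.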

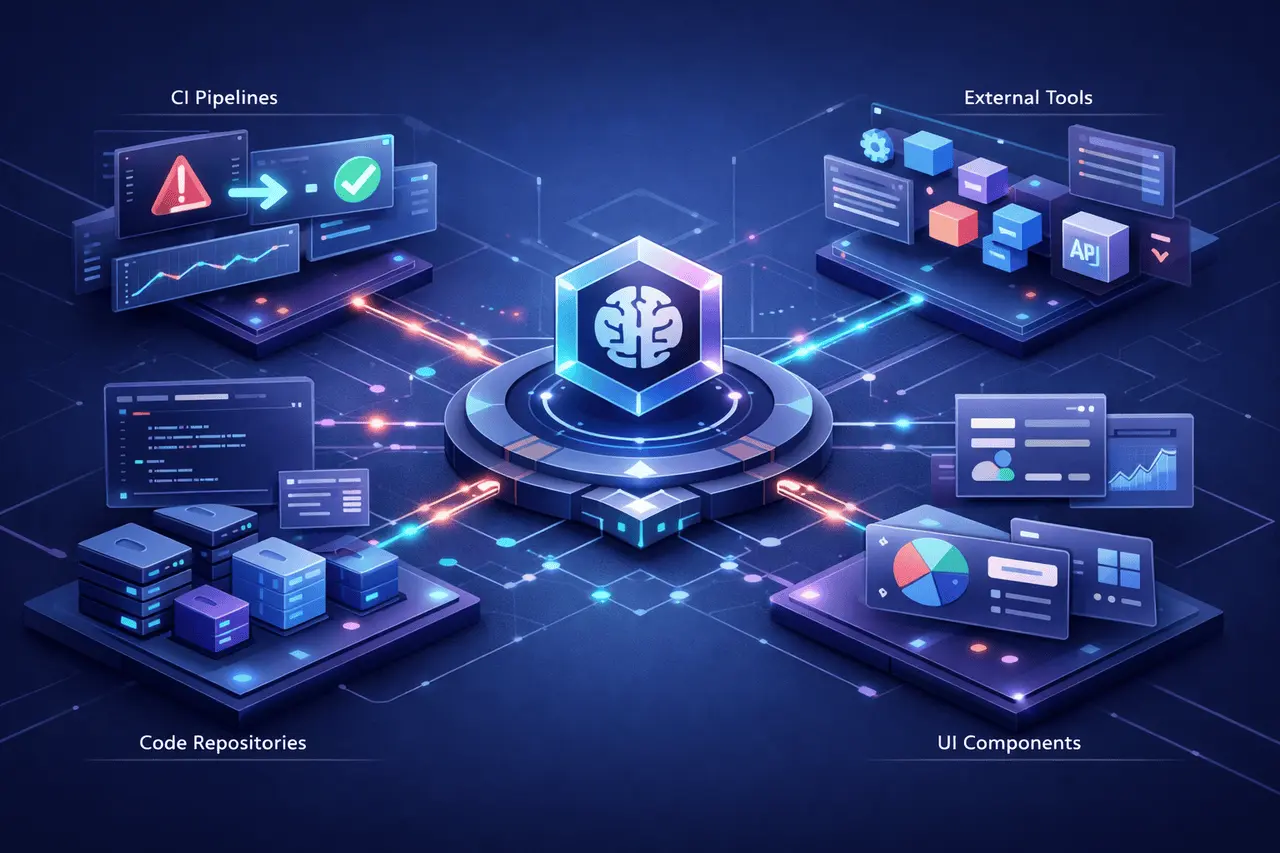

Modular “Lego-Like” Agent Architecture

CoPaw is built from modular components that let developers compose and extend AI behaviour dynamically.

Agentic Workflow Components

- Modular prompts

- Hooks

- Tools

- Skill integrations

- MCP (Model Context Protocol) hot-swapping

Hot-swapping components without a restart makes development and experimentation more flexible.
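The idea behind hot-swapping can be sketched in a few lines: if the agent looks a tool up by name at call time, replacing the entry changes behaviour immediately, with no restart. `ToolRegistry` below is a hypothetical illustration and says nothing about how CoPaw or MCP actually manage components.

```python
from typing import Callable, Dict

Tool = Callable[[str], str]


class ToolRegistry:
    """Hypothetical registry that resolves tools by name on every call."""

    def __init__(self) -> None:
        self._tools: Dict[str, Tool] = {}

    def register(self, name: str, tool: Tool) -> None:
        """Register a tool, replacing any existing one with the same name."""
        self._tools[name] = tool

    def call(self, name: str, arg: str) -> str:
        # Late lookup: a swapped-in tool is picked up on the very next call.
        return self._tools[name](arg)


tools = ToolRegistry()
tools.register("summarise", lambda text: text[:10])
before = tools.call("summarise", "CoPaw is modular and open")

# Hot-swap: same name, new behaviour, process keeps running.
tools.register("summarise", lambda text: text.split()[0])
after = tools.call("summarise", "CoPaw is modular and open")

print(before, "|", after)  # CoPaw is m | CoPaw
```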

Skill Hub Integration

CoPaw lets you import capabilities from community hubs in one command. This enables:

- Rapid expansion of capability

- Community-driven innovation

- Reusable automation components

This approach resembles the plugin ecosystems found in modern software platforms, but is designed specifically for AI agents.

Proactive Multichannel Integration

CoPaw does not limit itself to one interface. It can be integrated with:

- DingTalk

- Feishu

- Discord

- iMessage

- Additional extensible channels

A standardised protocol simplifies the development of new channel plugins.
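A standardised channel interface might look something like the sketch below. The `Channel` protocol, its `send` method, and `ConsoleChannel` are all hypothetical names chosen for illustration, since the source does not describe CoPaw's actual plugin contract.

```python
from typing import List, Protocol


class Channel(Protocol):
    """Hypothetical contract every channel plugin would satisfy."""

    name: str

    def send(self, recipient: str, text: str) -> None: ...


class ConsoleChannel:
    """Stand-in plugin that prints instead of calling a messaging API."""

    name = "console"

    def __init__(self) -> None:
        self.sent: List[str] = []

    def send(self, recipient: str, text: str) -> None:
        line = f"{recipient}: {text}"
        self.sent.append(line)
        print(line)


def broadcast(channels: List[Channel], recipient: str, text: str) -> int:
    """Deliver one message through every channel; return how many were used."""
    for channel in channels:
        channel.send(recipient, text)
    return len(channels)


console = ConsoleChannel()
broadcast([console], "alice", "Build finished")  # prints: alice: Build finished
```

A new plugin only has to provide `name` and `send`. The agent code that calls `broadcast` never needs to know which platform sits behind a channel.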

Use Cases by Channel

| Channel Type | Example Use Case |
|---|---|
| Messaging Apps | Automated personal assistant |
| Workplace Tools | Task coordination |
| Community Platforms | AI moderation and support |
| Mobile Messaging | Personal productivity |

This lets users access CoPaw wherever communication occurs.

Roadmap: What’s Next for CoPaw?

The roadmap includes several significant enhancements to boost capabilities and performance.

Multimodal Interaction

The next update will provide support for:

- Voice input/output

- Video interaction

This will allow CoPaw to function without keyboard input, increasing accessibility and practical utility.

Locally Deployable Optimised Models

Planned improvements include:

- Additional training and fine-tuning

- Smaller LLMs optimised for local deployment

- Enhanced support for core native skills

This will strengthen CoPaw’s ability to serve as a privacy-focused smart assistant.

Large-Small Model Collaboration

A hybrid architecture is currently under development, in which:

- Lightweight local models can handle private or sensitive tasks

- More powerful cloud-based models handle complex reasoning

This strategy balances:

- Security

- Performance

- Cost efficiency

- Computational scalability
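One way to picture large-small collaboration is a router that decides, per request, which model should answer. The sketch below is a guess at the general shape and not the planned CoPaw design: the keyword list, the word-count threshold, and the name `choose_backend` are assumptions, and prompt length is only a crude proxy for reasoning complexity.

```python
import re

# Assumption: a simple keyword check stands in for real sensitivity detection.
SENSITIVE = re.compile(r"\b(password|salary|passport|medical)\b", re.IGNORECASE)


def choose_backend(prompt: str, max_local_words: int = 50) -> str:
    """Return "local" or "cloud" for a prompt using two heuristic rules."""
    # Rule 1: anything that looks private stays on the device.
    if SENSITIVE.search(prompt):
        return "local"
    # Rule 2: long prompts are treated as complex and sent to the cloud.
    if len(prompt.split()) > max_local_words:
        return "cloud"
    # Default: short, non-sensitive prompts are cheap to answer locally.
    return "local"


print(choose_backend("What is my salary this month?"))  # local
print(choose_backend("analyse " * 60))                  # cloud
```

Checking sensitivity first means a long prompt that contains private data still stays local, which puts security ahead of performance when the two conflict.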

Cloud-Native Architecture Optimisation

Planned integration with AgentScope Runtime aims to broaden:

- Cloud resource utilization

- Storage capabilities

- Ecosystem compatibility

This lets users scale CoPaw from local single-user use to cloud-native deployments.

Benefits of CoPaw

1. Privacy Control

Local-first architecture keeps sensitive data on-device.

2. Vendor Independence

Bring-your-own-model support reduces lock-in.

3. Extensibility

Skill hubs and modular tools extend functionality.

4. Cross-Channel Deployment

Works across collaboration and messaging platforms.

5. Persistent Memory

Vector-based long-term memory improves context continuity.

Limitations and Considerations

While CoPaw is flexible, users should consider:

- Local hardware limitations when running LLMs

- Configuration complexity for advanced setups

- Regular maintenance of custom integrations

- Model size and performance trade-offs in local mode

Companies that deploy at a large scale may require cloud-based optimisations to maintain performance.

Practical Applications

CoPaw is a good fit for:

- Personal AI assistants for productivity

- Developer automation workflows

- AI-powered research assistants

- Secure internal enterprise bots

- Multichannel communication agents

Its architecture supports both hobbyist experiments and enterprise-grade AI workflows.

My Final Thoughts

CoPaw is a significant advancement in open-source personal AI systems. By combining local-first deployment, a modular architecture, persistent memory, and multichannel connectivity, CoPaw gives users complete control over their AI companion.

As AI ecosystems move towards hybrid cloud-local architectures and privacy-focused workflows, CoPaw’s model flexibility positions it well. Its roadmap, which includes multimodal interaction, optimised local models, and large-small model collaboration, points to continued gains in both capability and usability.

For those who want control, transparency, and flexible AI architectures, CoPaw offers a compelling open-source base for the next generation of AI-powered personal assistants.

Frequently Asked Questions (FAQs)

1. What makes CoPaw different from similar AI assistants?

CoPaw combines a local-first design with a modular agent architecture and bring-your-own-model support, giving you greater control over your data and the models you use.

2. Can CoPaw run completely offline?

Yes. When configured with supported local models, such as those run through Ollama or llama.cpp, CoPaw can operate locally without cloud-based APIs.

3. Does CoPaw support multiple communication platforms?

Yes. It works with platforms like DingTalk, Feishu, Discord, and iMessage, and even supports custom-built channels.

4. What is CoPaw’s long-term memory feature?

It uses vector-based semantic memory to store the user’s preferences and task context across sessions, with no complex database configuration.

5. Is CoPaw suitable for enterprise use?

Through its cloud-native strategy and modular architecture, CoPaw can be adapted to work in enterprise environments, particularly in areas where privacy and customisation are top priorities.

6. Can I connect my own API endpoints to CoPaw?

Yes. CoPaw lets you add or remove custom model providers and integrate private API endpoints.
